Feature sharing between vision and tactile sensing

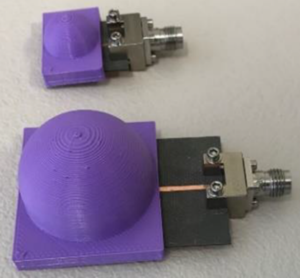

Vision and touch are two important sensing modalities for humans, and they offer complementary information about the environment. Our aim is to endow robots with a similar multi-modal sensing ability to achieve better perception. To this end, we propose a new fusion method named deep maximum covariance analysis (DMCA) that learns a joint latent space for sharing features between vision and tactile sensing. The features of camera images and of tactile data acquired from a GelSight sensor are learned by deep neural networks. However, the learned features are high-dimensional and redundant because of the differences between the two sensing modalities, which degrades perception performance. To address this, the learned features are paired using maximum covariance analysis. Results on a newly collected dataset of paired visual and tactile data of cloth textures show that a recognition performance of greater than 90% can be achieved with the proposed DMCA framework. In addition, we find that the perception performance of either vision or tactile sensing can be improved by employing the shared representation space, compared to learning from unimodal data.
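
The pairing step can be illustrated with a minimal sketch of plain maximum covariance analysis in NumPy. This is not the authors' DMCA implementation: the function name `mca_fit`, the feature dimensions, and the synthetic "visual" and "tactile" features are all assumptions made for illustration, standing in for features that a deep network would produce. The sketch centers both feature sets, takes the SVD of their cross-covariance matrix, and projects each modality onto the leading singular vectors to obtain a shared low-dimensional space.

```python
import numpy as np

def mca_fit(X, Y, k):
    """Maximum covariance analysis on paired feature matrices.

    X: (n, p) features from one modality, Y: (n, q) from the other.
    Returns projection matrices, the top-k singular values, and the means.
    (Hypothetical helper for illustration, not from the paper.)
    """
    X_mean = X.mean(axis=0)
    Y_mean = Y.mean(axis=0)
    Xc = X - X_mean
    Yc = Y - Y_mean
    # Cross-covariance between the two modalities, shape (p, q).
    C = Xc.T @ Yc / (X.shape[0] - 1)
    # Left/right singular vectors give the directions of maximal covariance.
    U, s, Vt = np.linalg.svd(C, full_matrices=False)
    return U[:, :k], Vt[:k].T, s[:k], X_mean, Y_mean

# Synthetic stand-ins for learned features (assumption: 3 shared latent factors).
rng = np.random.default_rng(0)
n = 200
shared = rng.normal(size=(n, 3))
X = shared @ rng.normal(size=(3, 16)) + 0.1 * rng.normal(size=(n, 16))  # "visual"
Y = shared @ rng.normal(size=(3, 12)) + 0.1 * rng.normal(size=(n, 12))  # "tactile"

Wx, Wy, s, mx, my = mca_fit(X, Y, k=3)

# Project both modalities into the shared 3-dimensional space.
Zx = (X - mx) @ Wx
Zy = (Y - my) @ Wy
```

In this sketch the cross-covariance of the projected pairs `Zx` and `Zy` is diagonal, with the singular values `s` on the diagonal, so each shared dimension captures covariance between the modalities that the others do not. A classifier could then be trained on `Zx` or `Zy` instead of the original high-dimensional features.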

Luo, S, Yuan, W, Adelson, E, Cohn, AG and Fuentes, R (2018) ViTac: Feature Sharing between Vision and Tactile Sensing for Cloth Texture Recognition. In: IEEE International Conference on Robotics and Automation, 21 - 25 May 2018, Brisbane Convention & Exhibition Centre, Brisbane, Australia.